---------------------------------------------------------------------------

BadRequestError Traceback (most recent call last)

Cell In[23], line 4

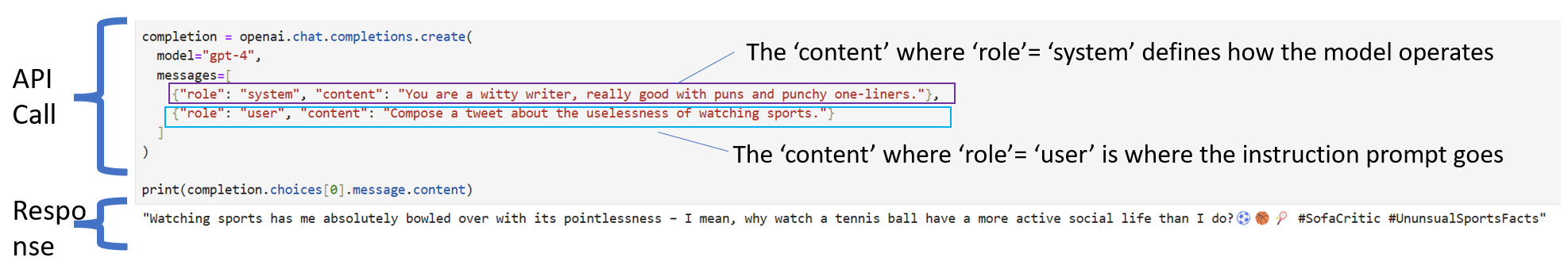

1 # Try with GPT-3.5

2 from openai import OpenAI

----> 4 completion = openai.chat.completions.create(

5 model="gpt-3.5-turbo",

6 messages=[

7 {"role": "system", "content": "You are a witty writer, really good with summarizing text. You will be provided text by the user that you need to summarize and present as the five key themes that underlie the text. Each theme should have a title, and its description not be longer than 15 words."},

8 {"role": "user", "content": text_to_summarize}

9 ]

10 )

12 print(completion.choices[0].message.content)

File ~\AppData\Local\Programs\Python\Python311\Lib\site-packages\openai\_utils\_utils.py:271, in required_args.<locals>.inner.<locals>.wrapper(*args, **kwargs)

269 msg = f"Missing required argument: {quote(missing[0])}"

270 raise TypeError(msg)

--> 271 return func(*args, **kwargs)

File ~\AppData\Local\Programs\Python\Python311\Lib\site-packages\openai\resources\chat\completions.py:659, in Completions.create(self, messages, model, frequency_penalty, function_call, functions, logit_bias, logprobs, max_tokens, n, presence_penalty, response_format, seed, stop, stream, temperature, tool_choice, tools, top_logprobs, top_p, user, extra_headers, extra_query, extra_body, timeout)

608 @required_args(["messages", "model"], ["messages", "model", "stream"])

609 def create(

610 self,

(...)

657 timeout: float | httpx.Timeout | None | NotGiven = NOT_GIVEN,

658 ) -> ChatCompletion | Stream[ChatCompletionChunk]:

--> 659 return self._post(

660 "/chat/completions",

661 body=maybe_transform(

662 {

663 "messages": messages,

664 "model": model,

665 "frequency_penalty": frequency_penalty,

666 "function_call": function_call,

667 "functions": functions,

668 "logit_bias": logit_bias,

669 "logprobs": logprobs,

670 "max_tokens": max_tokens,

671 "n": n,

672 "presence_penalty": presence_penalty,

673 "response_format": response_format,

674 "seed": seed,

675 "stop": stop,

676 "stream": stream,

677 "temperature": temperature,

678 "tool_choice": tool_choice,

679 "tools": tools,

680 "top_logprobs": top_logprobs,

681 "top_p": top_p,

682 "user": user,

683 },

684 completion_create_params.CompletionCreateParams,

685 ),

686 options=make_request_options(

687 extra_headers=extra_headers, extra_query=extra_query, extra_body=extra_body, timeout=timeout

688 ),

689 cast_to=ChatCompletion,

690 stream=stream or False,

691 stream_cls=Stream[ChatCompletionChunk],

692 )

File ~\AppData\Local\Programs\Python\Python311\Lib\site-packages\openai\_base_client.py:1200, in SyncAPIClient.post(self, path, cast_to, body, options, files, stream, stream_cls)

1186 def post(

1187 self,

1188 path: str,

(...)

1195 stream_cls: type[_StreamT] | None = None,

1196 ) -> ResponseT | _StreamT:

1197 opts = FinalRequestOptions.construct(

1198 method="post", url=path, json_data=body, files=to_httpx_files(files), **options

1199 )

-> 1200 return cast(ResponseT, self.request(cast_to, opts, stream=stream, stream_cls=stream_cls))

File ~\AppData\Local\Programs\Python\Python311\Lib\site-packages\openai\_base_client.py:889, in SyncAPIClient.request(self, cast_to, options, remaining_retries, stream, stream_cls)

880 def request(

881 self,

882 cast_to: Type[ResponseT],

(...)

887 stream_cls: type[_StreamT] | None = None,

888 ) -> ResponseT | _StreamT:

--> 889 return self._request(

890 cast_to=cast_to,

891 options=options,

892 stream=stream,

893 stream_cls=stream_cls,

894 remaining_retries=remaining_retries,

895 )

File ~\AppData\Local\Programs\Python\Python311\Lib\site-packages\openai\_base_client.py:980, in SyncAPIClient._request(self, cast_to, options, remaining_retries, stream, stream_cls)

977 err.response.read()

979 log.debug("Re-raising status error")

--> 980 raise self._make_status_error_from_response(err.response) from None

982 return self._process_response(

983 cast_to=cast_to,

984 options=options,

(...)

987 stream_cls=stream_cls,

988 )

BadRequestError: Error code: 400 - {'error': {'message': "This model's maximum context length is 16385 tokens. However, your messages resulted in 36800 tokens. Please reduce the length of the messages.", 'type': 'invalid_request_error', 'param': 'messages', 'code': 'context_length_exceeded'}}